today-AI-learned

(five minute hack-and-tell version)

The goal

Train a machine to find new & interesting things

Supervised learning

r/TIL, short for the Today I Learned subreddit

Keep only posts sourced from Wikipedia

Filter for consistent writing style...

Data collection

- Download all popular posts with score > 1000 for 2013 and 2014 (~5000)

- Download Wikipedia

- Cross-reference each post to the correct Wikipedia paragraph

- Build true positives (known TILs)

- Build decoys (other paragraphs in the TILs' articles)

- Build unknown samples (rest of Wikipedia*)
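The bookkeeping above could look roughly like this in plain Python. This is a minimal sketch, not the project's code: it assumes posts arrive as dicts with `title`, `url`, `score` and `created_utc` keys (PRAW submissions expose attributes with those names), and it matches a post to a paragraph by word overlap (Jaccard similarity), which is an assumed rule. `wiki_title`, `popular_wiki_posts`, `build_samples` and the label strings are hypothetical names.

```python
import re
from datetime import datetime, timezone
from urllib.parse import unquote, urlparse

def wiki_title(url):
    """Article title from a Wikipedia URL, or None if it is not a wiki article."""
    parsed = urlparse(url)
    if not parsed.netloc.endswith("wikipedia.org") or not parsed.path.startswith("/wiki/"):
        return None
    return unquote(parsed.path[len("/wiki/"):]).replace("_", " ")

def popular_wiki_posts(posts, min_score=1000, years=(2013, 2014)):
    """Keep high-scoring posts from the given years that link to Wikipedia.

    posts: iterable of dicts with 'title', 'url', 'score', 'created_utc'
           (hypothetical format, e.g. flattened from PRAW submissions).
    Returns (post_title, article_title) pairs.
    """
    kept = []
    for post in posts:
        year = datetime.fromtimestamp(post["created_utc"], tz=timezone.utc).year
        title = wiki_title(post["url"])
        if post["score"] > min_score and year in years and title:
            kept.append((post["title"], title))
    return kept

def words(text):
    """Lower-cased word set used for overlap matching."""
    return set(re.findall(r"\w+", text.lower()))

def jaccard(a, b):
    """Jaccard similarity of two word sets (0.0 if both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def build_samples(pairs, articles):
    """Label every paragraph as 'positive', 'decoy', or 'unknown'.

    pairs:    (post_title, article_title) pairs from popular_wiki_posts
    articles: dict mapping article_title -> list of paragraph strings
    Returns (article_title, paragraph_index, label) tuples.
    """
    labels = {}
    for post_title, article_title in pairs:
        paragraphs = articles.get(article_title)
        if not paragraphs:
            continue  # post links to an article we did not download
        post_words = words(post_title)
        # Assumed matching rule: the paragraph sharing the most words with the post.
        best = max(range(len(paragraphs)),
                   key=lambda i: jaccard(post_words, words(paragraphs[i])))
        labels[(article_title, best)] = "positive"
        # Other paragraphs of a TIL-linked article become decoys,
        # unless some post already marked them as positives.
        for i in range(len(paragraphs)):
            labels.setdefault((article_title, i), "decoy")
    samples = []
    for title, paragraphs in articles.items():
        for i in range(len(paragraphs)):
            samples.append((title, i, labels.get((title, i), "unknown")))
    return samples
```

Paragraphs from articles no TIL ever linked to fall through as `unknown`, matching the "rest of Wikipedia" bucket.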

Use all the tools!

Project uses modules: sqlite3, requests, bs4, pandas, numpy, scikit-learn, gensim, praw, wikipedia, nltk, stemming.porter2

Data Wrangling

Tokenize

>> "Good muffins cost $3.88\n in New York"

['Good', 'muffins', 'cost', 'TOKEN_MONEY', 'in', 'New', 'York', 'TOKEN_EOS']
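The exact tokenizer rules aren't on the slide. A minimal regex-based sketch that reproduces the example above, assuming dollar amounts become `TOKEN_MONEY` and each sentence ends with `TOKEN_EOS`; `tokenize` and the regexes are hypothetical.

```python
import re

MONEY_RE = re.compile(r"\$\d+(?:[.,]\d+)*")   # e.g. "$3.88" or "$1,000"
SENTENCE_END_RE = re.compile(r"[.!?]+")       # naive sentence boundary
WORD_RE = re.compile(r"\w+(?:'\w+)?")         # words, keeping "don't" whole

def tokenize(text):
    """Split text into word tokens with special money / end-of-sentence tokens."""
    # Replace money first so the decimal point is not read as a sentence end.
    text = MONEY_RE.sub(" TOKEN_MONEY ", text)
    tokens = []
    for sentence in SENTENCE_END_RE.split(text):
        found = WORD_RE.findall(sentence)
        if found:
            tokens.extend(found)
            tokens.append("TOKEN_EOS")
    return tokens

print(tokenize("Good muffins cost $3.88\n in New York"))
```

Splitting on `.!?` will misfire on abbreviations like "Mr."; NLTK's `sent_tokenize` is the sturdier choice if that matters.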

Remove "stop words"

>> "I sat on the rock"

['I', 'sat', 'on', 'rock']
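The example drops only "the", so the stop list used here seems short. This sketch uses a tiny hypothetical list chosen to reproduce that output; a real pipeline would probably swap in a longer list such as NLTK's.

```python
STOP_WORDS = frozenset({"the", "a", "an"})  # hypothetical, deliberately tiny list

def remove_stop_words(tokens, stop_words=STOP_WORDS):
    """Drop tokens whose lower-cased form is in the stop list, keeping order."""
    return [t for t in tokens if t.lower() not in stop_words]

print(remove_stop_words("I sat on the rock".split()))
```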

Stem words

>> stem("factionally")

'faction'
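The project lists `stemming.porter2`. NLTK's `SnowballStemmer("english")` implements the same Porter2 (Snowball English) algorithm, so it should give the same stems. A sketch assuming `nltk` is installed; `stem_tokens` is a hypothetical helper.

```python
from nltk.stem.snowball import SnowballStemmer

# The Snowball "english" stemmer is the Porter2 algorithm.
stemmer = SnowballStemmer("english")

def stem_tokens(tokens):
    """Stem every token (SnowballStemmer.stem also lower-cases its input)."""
    return [stemmer.stem(t) for t in tokens]

print(stemmer.stem("factionally"))
print(stem_tokens(["muffins", "running"]))
```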

"Entropy" vectors

Measures how unique each word is compared with the rest of the entry (a local weighting)

TF-IDF (term frequency-inverse document frequency)
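One reading of "local" TF-IDF, assumed here since the slides don't spell it out: each paragraph is a document and the paragraphs of one Wikipedia article are the corpus. A word then scores high when it is frequent in one paragraph but rare in the rest of the article. This sketch uses the plain tf × log(N / df) form. scikit-learn's `TfidfVectorizer` smooths and normalizes by default, so its numbers would differ. `local_tfidf` is a hypothetical name.

```python
import math
from collections import Counter

def local_tfidf(paragraphs):
    """Per-paragraph TF-IDF weights, using one article's paragraphs as the corpus.

    paragraphs: list of token lists (already tokenized, stop-worded, stemmed)
    Returns a list of {term: weight} dicts, one per paragraph.
    """
    n_docs = len(paragraphs)
    # Document frequency: how many paragraphs contain each term.
    df = Counter(term for tokens in paragraphs for term in set(tokens))
    weights = []
    for tokens in paragraphs:
        counts = Counter(tokens)
        total = len(tokens)
        # tf = count / paragraph length; idf = log(N / df)
        weights.append({
            term: (count / total) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return weights
```

A word that appears in every paragraph of the article gets weight 0, so article-wide topic words stop dominating.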

Feature generation

Used Word2Vec (developed by Google), with word vectors weighted by local article TF-IDF

>>> model.wv.most_similar(positive=['woman', 'king'], negative=['man'])

[('queen', 0.50882536), ...]

>>> model.wv.doesnt_match("breakfast cereal dinner lunch".split())

'cereal'

>>> model.wv.similarity('woman', 'man')

0.73723527

>>> model.wv['computer']  # raw numpy vector of a word

array([-0.00449447, -0.00310097, 0.02421786, ...], dtype=float32)

Dense vectors (typically a few hundred dimensions) use far fewer features than vocabulary-sized bag-of-words vectors, while still storing relationships between words!

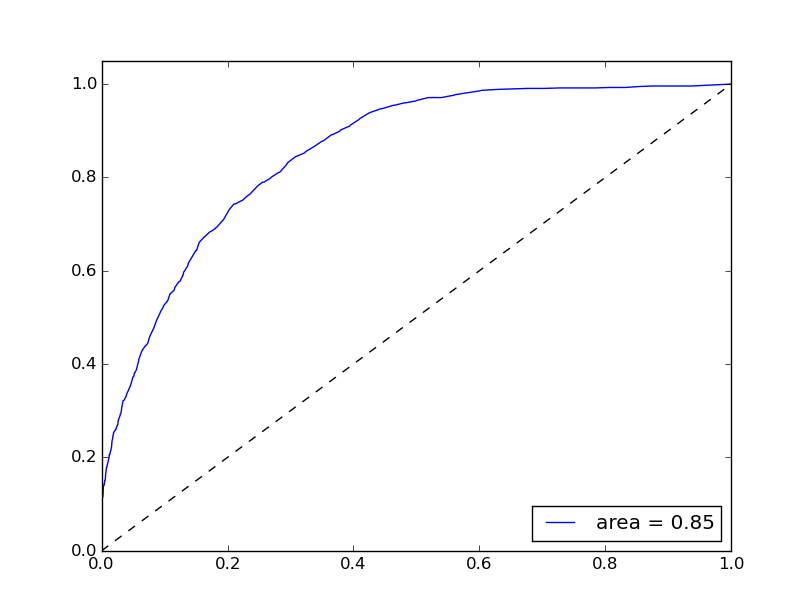
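Combining the two ideas, a paragraph can be turned into one feature vector. This sketch assumes that "weighted by local article TF-IDF" means a TF-IDF-weighted *average* of word vectors; a weighted sum would be another valid reading. `word_vectors` can be any mapping from term to vector. A gensim `KeyedVectors` (such as `model.wv`) supports both `in` and `[]`, and `model.wv.vector_size` gives `dim`. `paragraph_vector` is a hypothetical name.

```python
import numpy as np

def paragraph_vector(tfidf_weights, word_vectors, dim):
    """TF-IDF-weighted average of word vectors for one paragraph.

    tfidf_weights: {term: weight} for the paragraph
    word_vectors:  mapping term -> 1-D array of length `dim` (e.g. model.wv)
    Terms missing from the vocabulary are skipped.
    """
    total = np.zeros(dim, dtype=np.float64)
    weight_sum = 0.0
    for term, weight in tfidf_weights.items():
        if term in word_vectors:
            total += weight * np.asarray(word_vectors[term], dtype=np.float64)
            weight_sum += weight
    if weight_sum == 0.0:
        return total  # no known words (or all weights zero): zero vector
    return total / weight_sum
```

Every paragraph ends up with the same fixed length `dim`, whatever its word count, which is what a tree classifier needs as input.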

Model training

Used Extremely Randomized Trees (ExtraTrees), a variant of the random forest classifier.

Training classifier

Test Accuracy: 0.878; Test Accuracy on TP: 0.116; Test Accuracy on TN: 0.998
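The split between "overall" and "on TP / on TN" accuracy matters when classes are imbalanced. If the overall figure is the class-weighted mix of the two, then 0.116·p + 0.998·(1 − p) = 0.878 puts the positive share p at roughly 14%, so the negatives dominate the headline number. The sketch below trains scikit-learn's `ExtraTreesClassifier` on *synthetic* stand-in data (random features, about 15% positives). None of these numbers come from the project. `per_class_accuracy` is a hypothetical helper.

```python
import numpy as np
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import train_test_split

def per_class_accuracy(y_true, y_pred):
    """Overall accuracy plus accuracy on each class (1 = positive, 0 = negative)."""
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    return {
        "overall": (tp + tn) / (tp + tn + fp + fn),
        "on_positives": tp / (tp + fn) if tp + fn else float("nan"),  # recall
        "on_negatives": tn / (tn + fp) if tn + fp else float("nan"),  # specificity
    }

# Synthetic stand-in data: 1 = known TIL paragraph, 0 = decoy (hypothetical features).
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 20))
y = (rng.random(1000) < 0.15).astype(int)
X[y == 1, :3] += 0.5  # give the positives a weak signal

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)
clf = ExtraTreesClassifier(n_estimators=200, random_state=0)
clf.fit(X_train, y_train)
print(per_class_accuracy(y_test, clf.predict(X_test)))
```

`ExtraTreesClassifier` also accepts `class_weight="balanced"`, one knob that could trade some accuracy on negatives for more hits on positives.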

Does it work?

Yes! (Examples incoming.) It still requires a human to write titles and filter the results. It mistakenly concentrates on movie & book plot summaries (if they happened in real life, they would be exciting!).

Does it pass the Turing test?

shhhhh.... secretly submitting to reddit soon

Examples!

Bubble wrap

"Bubble wrap" is a generic trademark owned by Sealed Air Corporation. In 1957 two inventors named Alfred Fielding and Marc Chavannes were attempting to create a three-dimensional plastic wallpaper. Although the idea was a failure, they found that what they did make could be used as packing material. Sealed Air Corp. was co-founded by Alfred Fielding.


DineEquity

Julia Stewart, who originally worked as a waitress at IHOP and worked her way up through the restaurant industry, became Chief Executive Officer of IHOP Corporation. She had previously been President of Applebee’s, but left after being overlooked for that company's CEO position. She became CEO of IHOP in 2001, and returned to manage her old company due to the acquisition.


George R. R. Martin

Martin is opposed to fan fiction, believing it to be copyright infringement and a bad exercise for aspiring writers.


Andy Kaufman

At Thanksgiving dinner on Long Island, New York, in November 1983, several family members openly expressed worry about Kaufman's persistent coughing. He claimed that he had been coughing for nearly a month, visited his doctor, and been told that nothing was wrong. When he returned to Los Angeles, he consulted a physician, then checked himself into Cedars-Sinai Hospital for a series of medical tests. A few days later, he was diagnosed with an extremely rare type of lung cancer. Though Kaufman almost never smoked cigarettes, it was speculated by his doctors that he may have developed lung cancer from repeated exposure to second-hand smoke while performing in nightclubs where smoking was permitted during that period.

Thank you!

Looking for an overpowered scientist turned analyst/developer? Let's talk!

travis.hoppe @ gmail.com